The Debrief

EHR emoji | ChatGPT for hospitals | Wellness panic

Sharing a handful of interesting/important things with my thoughts.

Editorial note: It came to my attention that I might have fetishized mdGPTs in this week’s post, The Cognitive Prosthetic. So in the next couple of weeks I’ll self-counter with something covering my concerns around these new tools. Stay tuned.

OpenAI’s quiet release of ChatGPT for Healthcare

In last week’s Debrief I shared OpenAI’s release of ChatGPT Health, but I missed their stealthy drop of ChatGPT for Healthcare. ChatGPT Health, as you recall, is the consumer end of ChatGPT: it lets folks upload records, connect to wellness apps, etc.

ChatGPT for Healthcare. This is OpenAI’s bid to become the working layer between doctors and the rest of the hospital info system. It’s tidied up for administrators and IT types with enterprise controls, data retention controls, and business agreements to support HIPAA workflows.

I missed it because they didn’t publicize it heavily. (Almost simultaneously, Anthropic announced a version of Claude similarly tailored to enterprise healthcare users.)

Torch acquisition. And the plot thickens — and this is important. OpenAI acquired Torch (not Torchy’s, the taco chain), a small health-tech company designed to turn EHR mayhem (labs, meds, visit recordings) into something usable.

So while OpenAI builds the polished doctor interface, Epic clunks on below as the information engine.

🔺 What’s important isn’t what OpenAI is doing with any one of these products; it’s their intent. These are the moves you make when you want to penetrate the healthcare workspace. Beyond trying to be the layer between you and Epic/Cerner, this is a defensive move against commoditization. Pretty interesting to think about where this could go.

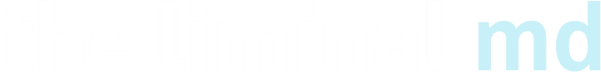

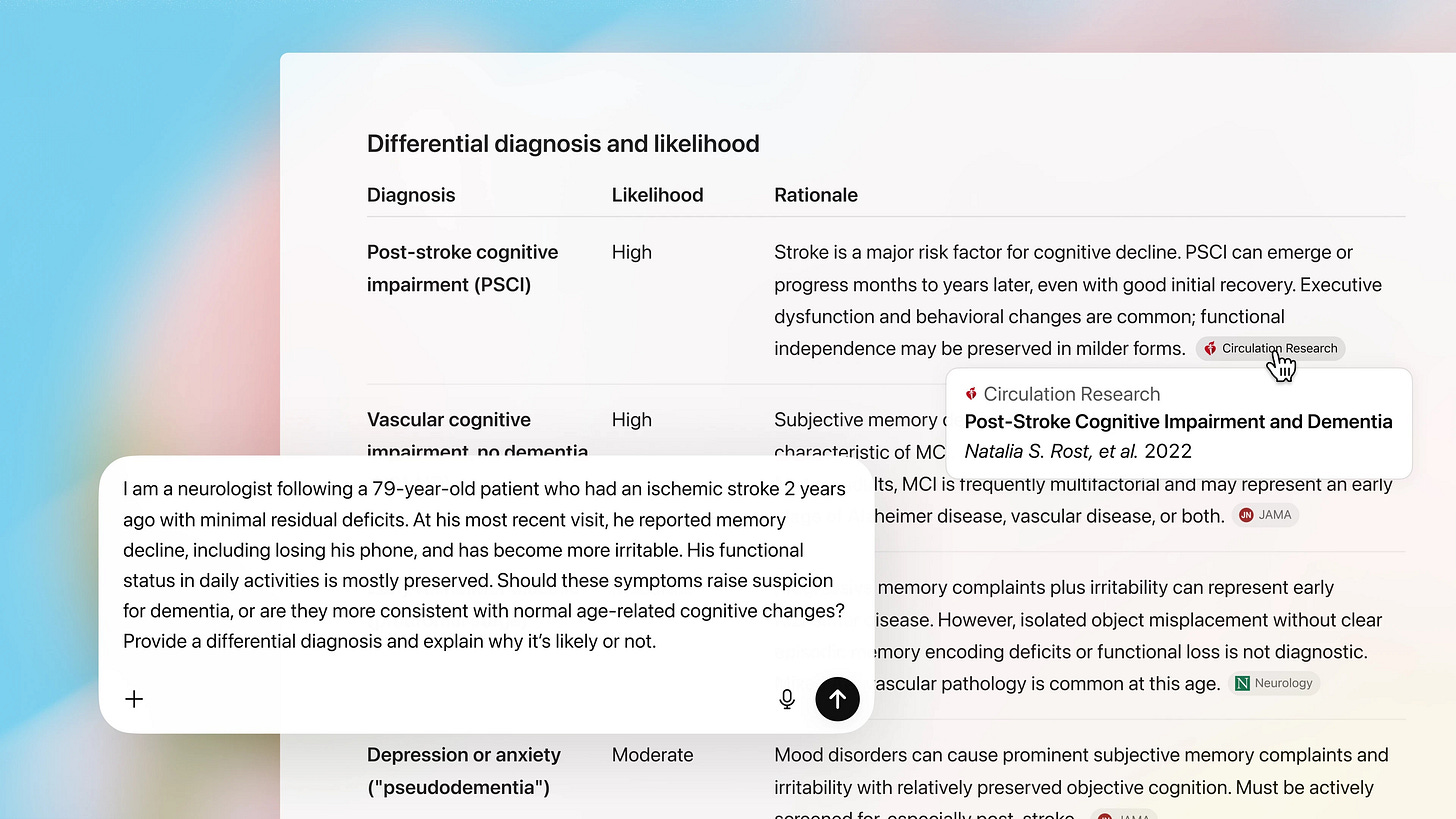

OpenEvidence games

This is clever. This week OpenEvidence started offering a game feature, Synapses, on their platform. It’s a kind of matching game. I tried one in cardiology, but I don’t know anything about hearts. When I was adding links this morning I could only find it on the mobile app under ‘Discovery’, so start there if you want to check it out. I’ll add that they need a leaderboard. Docs are competitive 🥇.

🔺 This makes sense. OpenEvidence has to continue to position itself broadly along its axis of information | knowledge | wisdom. This feature aligns with that core.

Emoji in medical records

In a study published this week in JAMA Network Open, researchers found that from 2020 to 2024, fewer than two notes per 100,000 included emoji. But by late 2025, emoji showed up in 10+ notes per 100,000.

Michigan Medicine (where the study originated) prohibits emoji in the medical record. It’s worth noting that most emoji were in portal messages to patients, telephone encounters, and encounter summaries.

The problem is the record serves competing interests including legal, compliance, the patient, and the doctor. Ask any of these folks what the MR is for and you’ll get different answers. Ask them what 🙂 means and you’ll definitely get different answers.

It seems the issue centers on what a patient understands when they encounter an emoji, which is fair.

🔺 I’ll call emoji defensible in portal messages where they’re purely social and the message is crystal clear without them. They’re probably questionable in core clinical documentation meant for other clinicians (progress notes, assessments). Here’s the best litmus test IMHO: If you delete the emoji and the message becomes even slightly unclear, you shouldn’t use it.

And I’ll die on this hill: If a hospital system wants to ban emoji in the name of clarity, fine. But then it should bring the same energy to medical jargon, templated filler, and other forms of medical slop.

The wellness industrial complex | What’s old is new again

Ryan McCormick, M.D. is one of Substack’s great medical thinkers. His recent Examined post on wellness panic and its parallels with an earlier time is worth a read.

> America is spiraling into a wellness panic—again. We’re microdosing, biohacking, cold-plunging our way toward a mythical immortality while measles cases explode and snake oil salesmen occupy the highest offices in the land. Sound familiar? It should. We lived through this exact fever dream 150 years ago, when rapid industrialization shattered society, inequality reached grotesque extremes, and terrified Americans turned to diet gurus and patent medicine hucksters promising control over bodies if not lives. The names have changed, but the con remains.

I’ll see y’all on Wednesday. I’m planning to share an interesting dilemma involving AirPods/headphones and doctors. I’d love to know what you think when it lands.

Somehow I can’t see myself ever intentionally using a naked ChatGPT interface for much of anything, and especially not for medicine. I’ll have to look at the Claude version, as I’ve been using Claude for some other things successfully, but my go-to LLM for medical work remains OpenEvidence. Thanks for the summary!

Can any of these AI healthcare products ever enter the EMR space directly?